Just a few days ago, in my opening keynote at our European Identity & Cloud Conference, I talked about the strong urge to move to more advanced security technologies, particularly cognitive security, both to close the skill gap we observe in information security and to strengthen our resilience against cyberattacks. The Friday after that keynote, as I was travelling back from the conference, reports about the massive attack caused by the “WannaCry” malware hit the news. A couple of days later, now that the dust has settled, it is time for a few thoughts about the consequences. In this post, I look at the role government agencies play in increasing cyber-risks; I will look at the mistakes enterprises have made in a separate post.

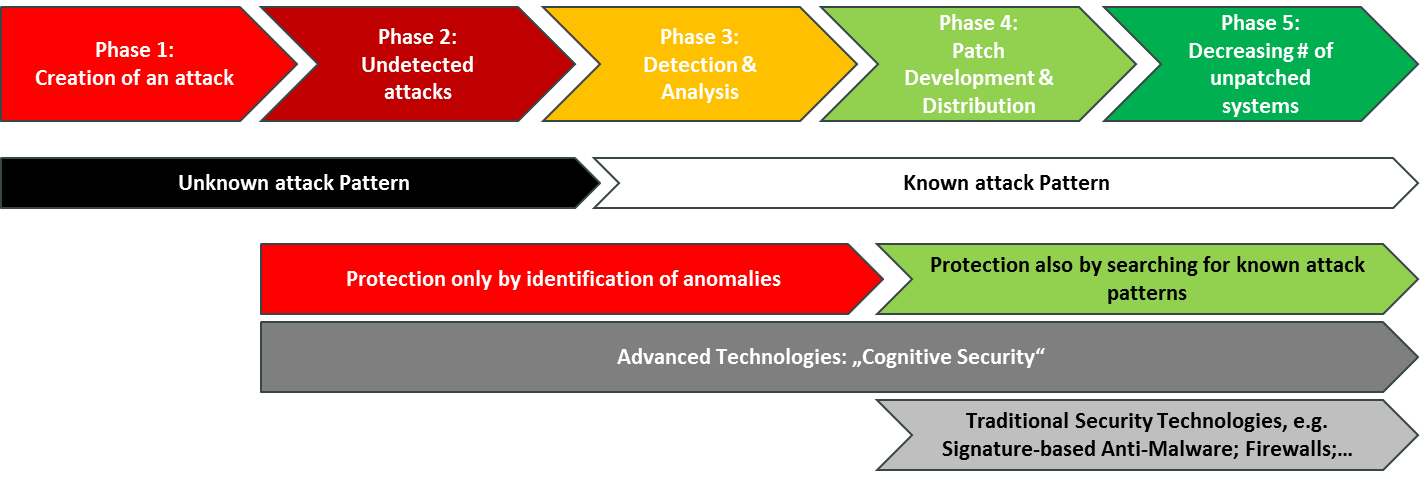

Let me start with a figure I used in one of my slides. When looking at how attacks are executed, we can distinguish between five phases, each of which sits somewhere between a “red hot” and a less critical “green” state. In phase 1, the attack is created. In phase 2, it starts spreading and remains undetected for a while, sometimes only briefly, sometimes for years. This is the most critical phase, because the vulnerabilities used by the malware exist but are not yet sufficiently protected. During that phase, the exploit is called a “zero-day exploit”, a somewhat misleading term because the exploit may already have been attacking for many days before day zero. The term refers to day zero as the day when the vulnerability is detected and countermeasures can start. In earlier years, there was a belief that no attacks start before a vulnerability is discovered, which was a naïve belief.

With detection, phase 3 begins: the exploit is analyzed and countermeasures are created, most commonly hot fixes (which have been tested only a little and usually must be installed manually) and patches (which are better tested and cleared for automated deployment). From there on, during phase 4, patches are distributed and applied.

Ideally, there would be no phase 5, but as we all know, many systems are not patched automatically, and for legacy operating systems, patches are often not provided at all. This leaves a significant number of systems unpatched, as in the case of WannaCry. Notably, there were alerts back in March that warned about that specific vulnerability and strongly recommended patching potentially affected systems immediately.

In the first two phases, we must deal with unknown attack patterns: we have to assume that they exist, even though we don’t know about them yet. This is a valid assumption, given that new exploits across all operating systems (yes, also for Linux, macOS or Android) are detected regularly. From phase 3 onward, the patterns are known and can be addressed.

Once the patterns are known, we can search for indicators of known attack patterns. Before we know these, we can only look for anomalies in behavior. But that is a separate topic, and it was a hot one at this year’s EIC.
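To make the difference between the two approaches concrete, here is a minimal sketch in Python. It is purely illustrative and not how any particular security product works: the indicator list, the function names, and the simple three-standard-deviations threshold are all assumptions chosen for this example.

```python
from statistics import mean, stdev

# Hypothetical indicator list: identifiers of files tied to already-known attacks.
# Real indicator feeds are far richer; these placeholder strings are assumptions.
KNOWN_BAD_HASHES = {"hash-of-known-malware-a", "hash-of-known-malware-b"}


def matches_known_indicator(file_hash: str) -> bool:
    """Phase 3 and later: the pattern is known, so a simple lookup is enough."""
    return file_hash in KNOWN_BAD_HASHES


def is_anomalous(history: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Phases 1 and 2: no pattern is known, so we can only flag unusual behavior.

    `history` holds past measurements of some behavior (for example, files
    modified per minute on a host). The observation is flagged when it lies
    more than `threshold` sample standard deviations away from the mean.
    """
    if len(history) < 2:
        return False  # not enough data to define what "normal" looks like
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return observed != mu  # perfectly constant history: any change stands out
    return abs(observed - mu) / sigma > threshold
```

The lookup can only catch what has already been seen somewhere, while the anomaly check might catch a new attack but knows nothing about what the attack actually is, and it will raise false alarms whenever legitimate behavior changes.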

So, why do I sometimes wanna cry about the irresponsibility and shortsightedness of government agencies? Because of what they do in phases 1 and 2. The NSA has been accused of having known about the exploit for quite a while without notifying Microsoft, and thus without giving Microsoft the chance to create a patch. Government organizations all over the world know a lot about exploits without informing vendors about them. They even create backdoors to systems and software, which can potentially be used by attackers as well. While there are reasons for that (cyber-defense, running their own nation-state attacks, counter-terrorism, etc.), there are also reasons against it. I don’t want to judge their behavior; however, it seems that many government agencies are not sufficiently aware of the consequences of creating their own malware for their purposes, not notifying vendors about exploits, and mandating backdoors in security products. I doubt that the agencies that do so can sufficiently balance their own specific interests with the cyber-risks they cause for the economies of their own and other countries.

There are some obvious risks. The first one is that a lack of notification extends phase 2, so attacks stay undetected longer. It would be naïve to assume that only one government agency knows about an exploit. It might well have been detected by other agencies, friends or enemies. It might also have been detected by cyber-criminals. This gets even worse when governments buy information about exploits from hackers to use it for their own purposes. It would also be naïve to believe that only that hacker has found or will find that exploit, or that the hacker will sell that information only once.

As a consequence, the entire economy is put at risk. People might die in hospitals because of such attacks, public transport might break down and so on. Critical infrastructures become more vulnerable.

Creating their own malware and requesting backdoors carries the same risks. Malware will be detected sooner or later, and backdoors might also be opened by the bad guys, whoever they are. The former results in new malware that is based on the initial one, with some modifications. The latter leads to additional vulnerabilities. The challenge is simply that, in contrast with physical attacks, little is needed to create new malware based on an existing attack pattern. Once malware is detected, the knowledge about it is freely available, and it just takes a decent software developer to create a new strain. In other words, by creating their own malware, government agencies create and publicize blueprints for the next attacks, and sooner or later someone will discover such a blueprint and use it. Cyber-risk for all organizations is thus increased.

This is not a new finding. Many people, including myself, have been pointing out this dilemma for a long time in publications and presentations. Being a dilemma, it is not easy to solve, but we need at least to have an open debate on it, and we need the government agencies that work in this field to at least understand the consequences of what they are doing and balance them against the overall public interest. Not easy to do, but we do need to get away from government agencies acting irresponsibly and shortsightedly.