Where we already benefit from AI

Efficiency, personalization, and the ability to turn numerous parameters into an actionable insight are some of the benefits we gain from AI.

Artificial intelligence is already embedded in our daily lives, often in ways that we barely notice anymore. In our personal lives, we benefit from recommendation engines, fraud detection for payment transactions, image classification in medical imaging, and natural language interaction with our smart devices. Organizations are harnessing AI as well: for anomaly detection in customer transactions and in IT security, for augmented analytics in business intelligence and data-driven decision making, and, through AutoML, for developing and monitoring customized models for their own specific use cases.

AI Is Already Changing the World. It Is Our Duty to Make Sure It Will Be a Better One.

Because the disciplines that make up AI are many (natural language processing, machine learning, computer vision, and more), it can be difficult to summarize how AI is positively impacting our lives. But overall, it is assisting humans in making decisions where there is an abundance of data. It may partially automate processes or appear to be a sentient decision maker, but AI’s real value comes from its supporting role: providing data-driven recommendations so that a human decision maker can make the best choice.

AI’s potential to ruin us

It is possible to have too much of a good thing…

AI exists to help humans make wiser decisions… that sounds like a very happy coexistence. But how easy is it for humans to just sit back and let AI do the heavy lifting? In a word: very.

Our own complacency and complicity in the development of AI have already proven stronger than our desire to make better-informed decisions. There are already cases of false arrests, of biased hiring practices, and of facial recognition algorithms that discriminate by race and gender, all perpetuating the existing biases and injustices of our analog world in the digital one. Each situation was handled a bit differently, but an overarching trend is that a human decision maker relied on the recommendation of the algorithm without questioning its validity in the situation’s greater context. And when humans willingly hand over their decision-making power to machines, it will be difficult to take it back.

AI’s potential to disrupt just and contextually aware decision making is not the only issue. Humans are very susceptible to manipulation, and AI offers endless opportunities for it. Deepfakes are a looming threat: they use ML techniques to generate believable video of events that never occurred, and can easily alter an audience’s perception of an individual, event, company, or political leader. But manipulation can come from other sources as well, such as algorithms that tend to yield the results users expect or that push users towards more extreme opinions. Collective harm from manipulative algorithms is less studied than individual harm, but has the potential for much more disastrous outcomes.

How to keep AI on a leash

Ethical analysis of AI is the short, simplified answer. Unfortunately, the question of how to keep AI on a leash so that it benefits our society and business operations cannot be simplified. As an example, here is a list of the high-level metrics that most AI governance frameworks propose using:

- Level of human involvement in an algorithm’s decision making

- Agile involvement in an algorithm’s decision making

- Ethical treatment of individuals (should not remove access to basic human rights)

- Technical robustness of AI system

- Data privacy

- Accountability

- Legal legitimacy

- Social and environmental impact

- Human-centric

- Internal risk management

At first glance, this may look like a great list. If all AI systems are technically robust, display accountability, remain human-centric, and treat humans ethically, then there should be no problem, right? But what does it mean to treat humans ethically? Each one of these metrics must be defined at a detailed level in order to be enforced, but who has the authority to define ethical treatment of humans? Definitions will differ across country and regional lines, and across lines of age, gender, individual versus collective wellbeing, health versus capital gain, risk-averse versus risk-friendly attitudes, and more.
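To make the point about definitions concrete, here is a minimal Python sketch of a governance checklist in which a metric only counts as enforceable once someone has written down a definition for it. The names `Metric` and `undefined_metrics`, and the sample definition for data privacy, are hypothetical illustrations and do not come from any published framework.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Metric:
    """One high-level governance metric, such as those listed above."""
    name: str
    # Hypothetical field: the concrete, agreed-upon definition, if one exists.
    definition: Optional[str] = None

    @property
    def enforceable(self) -> bool:
        # A metric with no written definition cannot be checked or enforced.
        return bool(self.definition and self.definition.strip())


def undefined_metrics(metrics: List[Metric]) -> List[str]:
    """Return the names of metrics that still lack a concrete definition."""
    return [m.name for m in metrics if not m.enforceable]


# Illustrative checklist; the definition text is an assumption, not a standard.
checklist = [
    Metric("Data privacy", "Personal data is processed only with documented consent."),
    Metric("Accountability"),
    Metric("Ethical treatment of individuals"),
]

print(undefined_metrics(checklist))
```

The sketch deliberately leaves open who writes each definition, which is exactly the unresolved question: the code can flag a missing definition, but it cannot decide what the definition should be.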

What we need is a collective effort to define our ethical needs and priorities as humans, reflective of our diversity. We need the support of technology philosophers to pose questions to us, and we must take the initiative to define what our future will contain.

Global initiatives to keep AI on track

There are several global initiatives to govern AI development. They are not legally binding regulations, but rather statements of best practice and opinion that carry the reputation and influence of the groups issuing them.

AI governance initiatives are not just state-led. They come from academia, independent research institutions, advocacy groups, private companies, country working groups, and international organizations. Some of the noteworthy ones are:

- Layered Model for AI Governance (2017, Harvard)

- China’s New Generation AI Governance Principles (2019)

- OECD AI Principles (2019)

- EU Guidelines on Ethics in AI (2020)

- Google Perspective on Issues in AI Governance (2020)

- Singapore Model Framework (2020)

- Proposal for a legal framework for AI (European Commission, April 2021)

The one with the most teeth is the European Commission’s proposed legal framework for artificial intelligence (April 2021). It must still be approved by the Council of the European Union and the European Parliament before it is formally adopted, after which there will be one and a half years before it comes into full force.

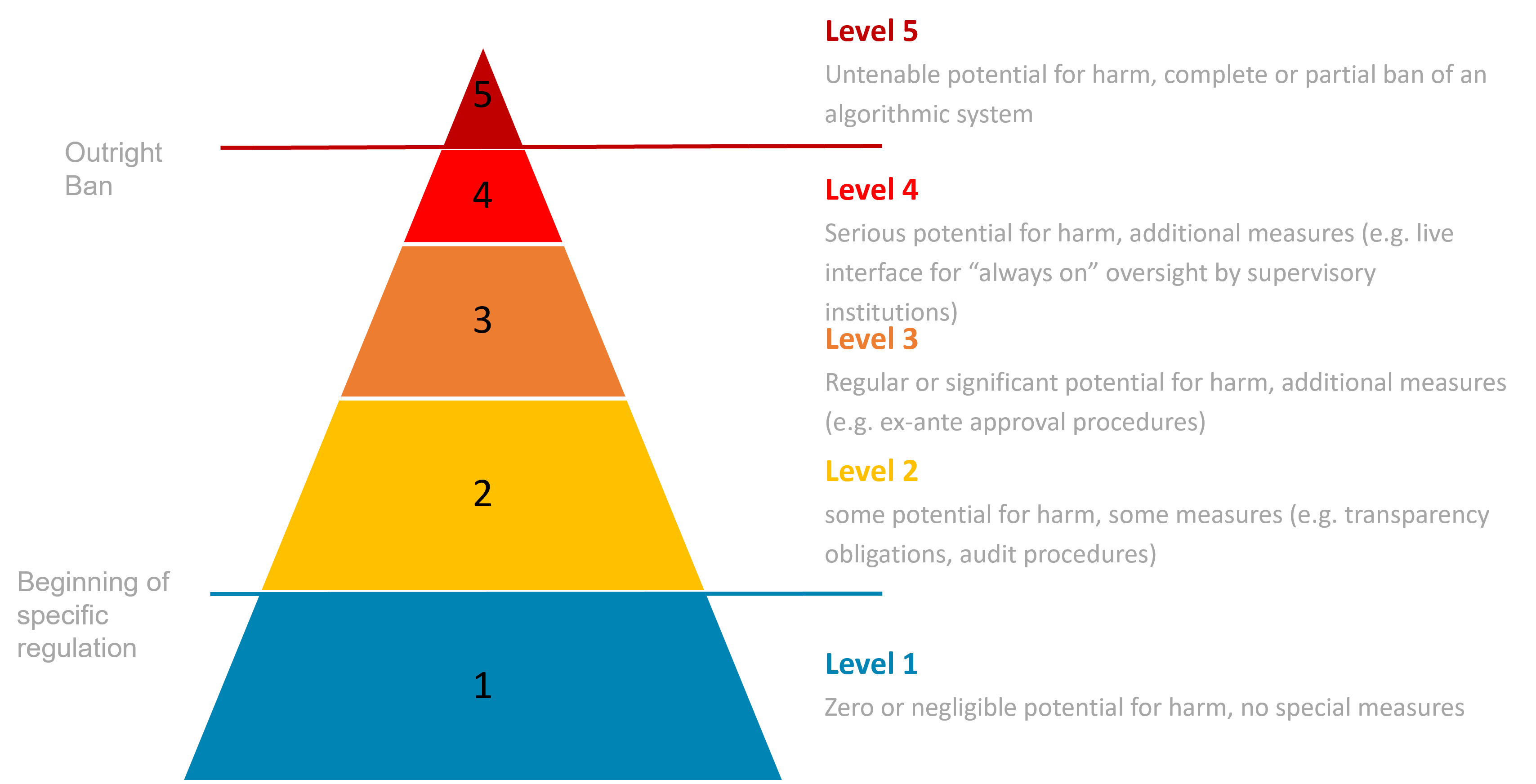

It proposes a tiered categorization of AI applications: those that create unacceptable risk, high risk, low risk, and minimal risk. Depending on the level of risk an AI system is assigned, it must meet different conditions before it can be used on the European market or affect European Union residents.
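The tiered idea can be sketched in a few lines of Python. This is only an illustration of the structure: the four tier names follow the paragraph above, while the `RiskTier` enum, the `may_be_used` function, and the one-line condition summaries are simplified assumptions and should not be read as the wording of the proposal.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable risk"
    HIGH = "high risk"
    LOW = "low risk"
    MINIMAL = "minimal risk"


# Simplified placeholder summaries (assumptions for illustration, not legal text).
CONDITIONS = {
    RiskTier.UNACCEPTABLE: "may not be used at all",
    RiskTier.HIGH: "must meet strict requirements before use",
    RiskTier.LOW: "must meet lighter requirements before use",
    RiskTier.MINIMAL: "no additional conditions",
}


def may_be_used(tier: RiskTier, conditions_met: bool) -> bool:
    """Decide whether a system in the given tier may be used.

    `conditions_met` is a hypothetical flag saying whether the system
    satisfies whatever conditions apply to its tier.
    """
    if tier is RiskTier.UNACCEPTABLE:
        return False  # No set of conditions makes this tier acceptable.
    if tier is RiskTier.MINIMAL:
        return True   # Nothing extra to satisfy in this sketch.
    return conditions_met


for tier in RiskTier:
    print(f"{tier.value}: {CONDITIONS[tier]}")
```

The point of the structure is that the hard work happens before this code runs: someone has to assign a system to a tier, and that assignment determines everything that follows.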

Final critique

If regulations are not in place to keep AI in check, then we have to step in.

We are nowhere near the end of this story. AI technologies are rapidly developing and improving. Our fears around AI are solidifying, and regulation is nowhere near being able to protect us from them. This requires individual and corporate initiative to intentionally build a safe future with AI at our side. Organizations that are considering implementing AI in their processes should adopt an AI governance framework that critically assesses how the system improves business, and whom it harms. They should continually strive for transparency, so that those relying on an algorithm for decision making understand its weaknesses and the best practices for using it. And they should keep a multidisciplinary team on hand to consider the hard questions of ethical implementation.